Installing KubeVirt on Kubernetes

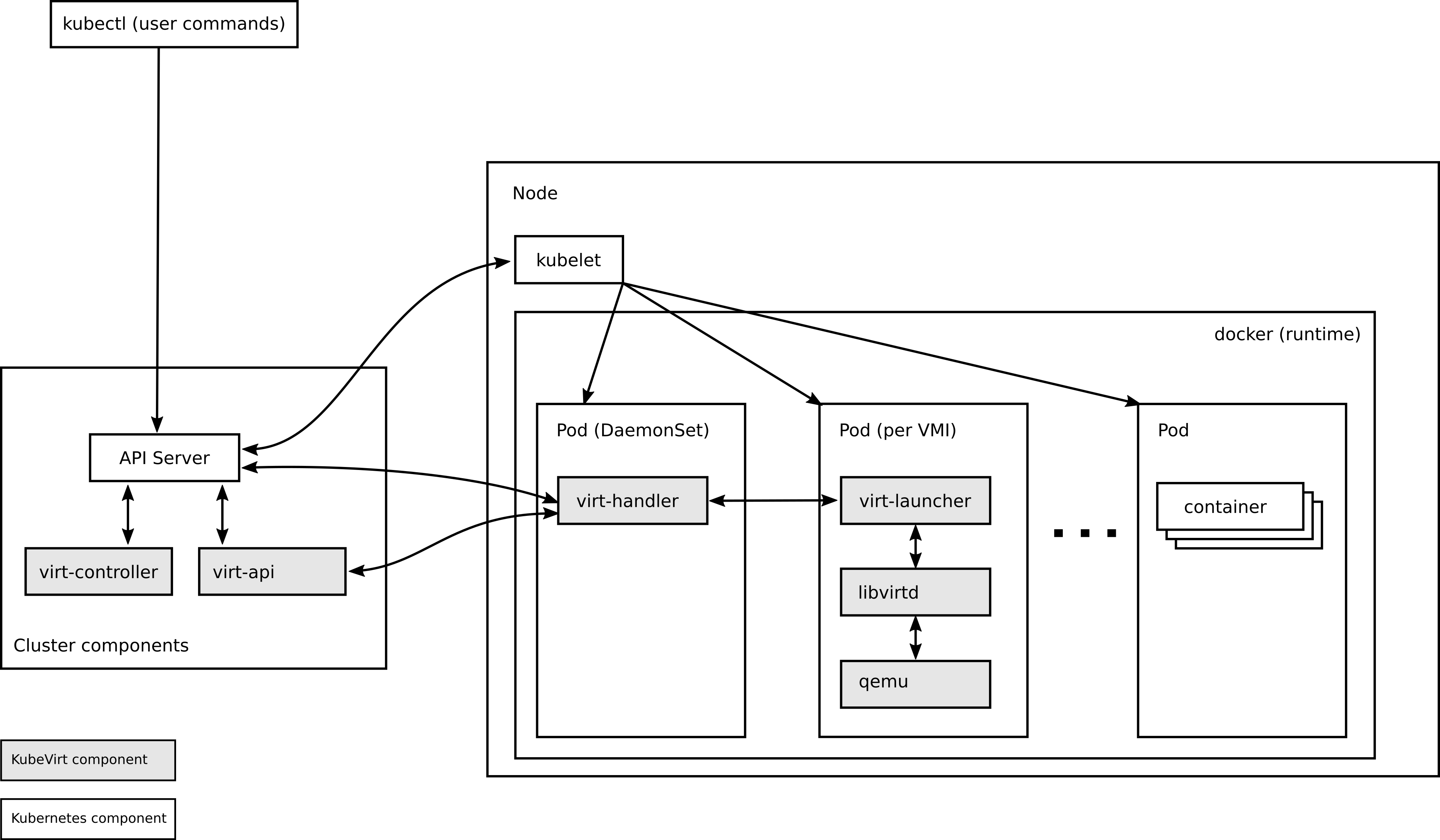

Architecture

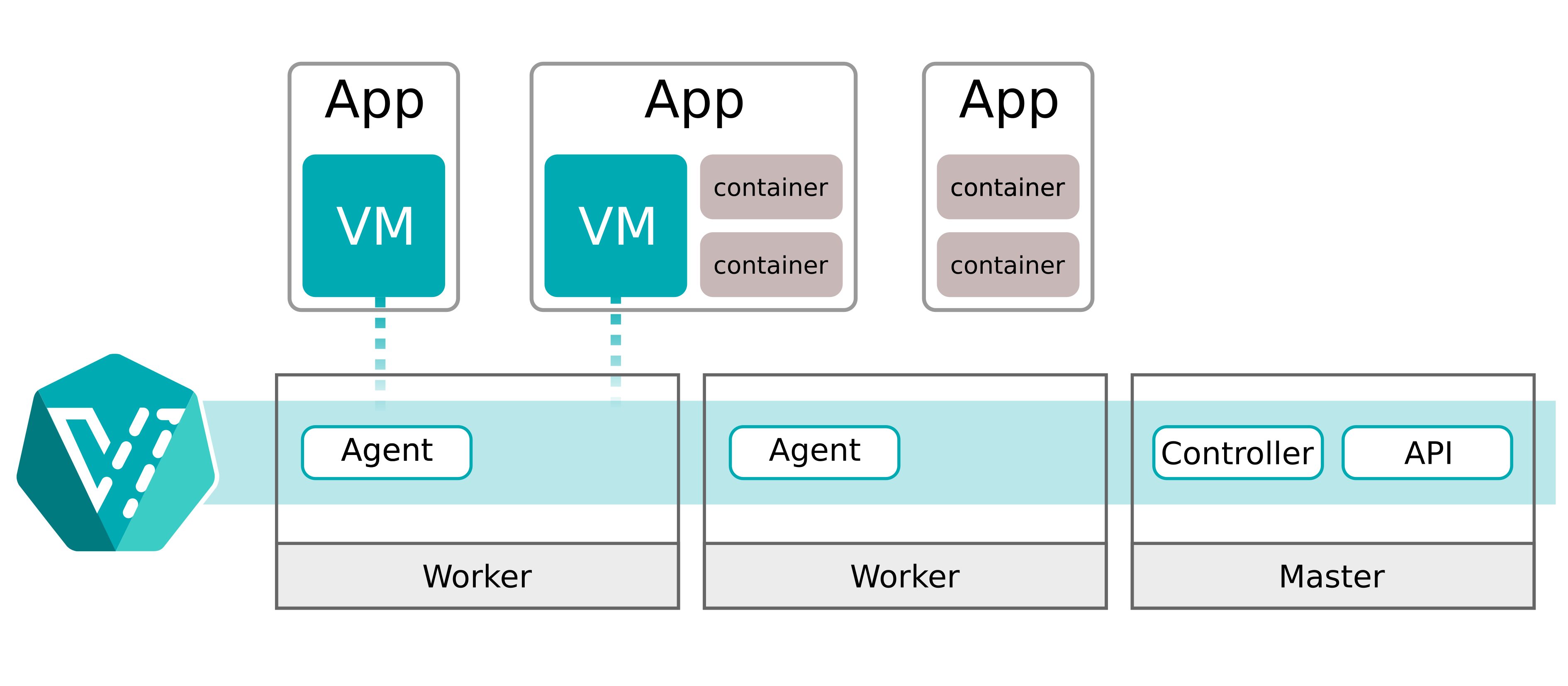

A simplified version

libvirt

Environment check

[root@k8s-master ~]# virt-host-validate qemu

-bash: virt-host-validate: command not found

[root@k8s-master ~]#

Install virt-host-validate (shipped with libvirt)

yum -y install libvirt

Environment check (after installing libvirt)

[root@k8s-master ~]# virt-host-validate qemu

QEMU: Checking for hardware virtualization : PASS

QEMU: Checking if device /dev/kvm exists : PASS

QEMU: Checking if device /dev/kvm is accessible : PASS

QEMU: Checking if device /dev/vhost-net exists : PASS

QEMU: Checking if device /dev/net/tun exists : PASS

QEMU: Checking for cgroup 'cpu' controller support : PASS

QEMU: Checking for cgroup 'cpuacct' controller support : PASS

QEMU: Checking for cgroup 'cpuset' controller support : PASS

QEMU: Checking for cgroup 'memory' controller support : PASS

QEMU: Checking for cgroup 'devices' controller support : PASS

QEMU: Checking for cgroup 'blkio' controller support : PASS

QEMU: Checking for device assignment IOMMU support : WARN (No ACPI DMAR table found, IOMMU either disabled in BIOS or not supported by this hardware platform)

QEMU: Checking for secure guest support : WARN (Unknown if this platform has Secure Guest support)

[root@k8s-master ~]#
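To act on this output in a script rather than by eye, the results can be tallied. The following is a minimal sketch and not part of the original setup: summarize_validate is a hypothetical helper name, and it assumes only that each result line ends in ": PASS", ": WARN" or ": FAIL" (FAIL being what virt-host-validate prints for a failed check).

```shell
# Tally virt-host-validate results read from stdin.
# summarize_validate is a hypothetical helper name, not part of libvirt.
summarize_validate() {
  awk '
    /: PASS/ { pass++ }
    /: WARN/ { warn++ }
    /: FAIL/ { fail++ }
    END { printf "pass=%d warn=%d fail=%d\n", pass, warn, fail }
  '
}

# Usage (needs libvirt installed):
#   virt-host-validate qemu | summarize_validate
```

For the output above this would report 11 passes, 2 warnings and no failures. The two warnings (IOMMU and secure guest support) concern optional features.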

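Deploy KubeVirt

- Download the YAML files and add useEmulation: true.

- Apply the YAML files and wait for the pods to reach the Running state.

Configure kubevirt-cr

Add the useEmulation: true parameter under spec.configuration.developerConfiguration. It lets KubeVirt fall back to software emulation on nodes where hardware virtualization (/dev/kvm) is not available.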

[root@k8s-master ~]# cat kubevirt-cr.yaml

---
apiVersion: kubevirt.io/v1
kind: KubeVirt
metadata:
  name: kubevirt
  namespace: kubevirt
spec:
  certificateRotateStrategy: {}
  configuration:
    developerConfiguration:
      useEmulation: true
      featureGates: []
  customizeComponents: {}
  imagePullPolicy: IfNotPresent
  infra:
  workloadUpdateStrategy: {}

[root@k8s-master ~]#
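As an alternative to editing the file by hand, the same setting can be applied to an already created KubeVirt resource with a merge patch. This is a sketch, assuming the resource is named kubevirt in the kubevirt namespace as in the file above; enable_emulation is a hypothetical wrapper name.

```shell
# Hypothetical wrapper: set spec.configuration.developerConfiguration.useEmulation
# on the KubeVirt custom resource using a JSON merge patch.
enable_emulation() {
  kubectl -n kubevirt patch kubevirt kubevirt --type=merge \
    --patch '{"spec":{"configuration":{"developerConfiguration":{"useEmulation":true}}}}'
}

# Usage (needs a cluster with the KubeVirt operator installed):
#   enable_emulation
```

A merge patch only touches the listed field, so the rest of the spec is left as deployed.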

Deploy KubeVirt

- export RELEASE=$(curl https://storage.googleapis.com/kubevirt-prow/release/kubevirt/kubevirt/stable.txt)

- kubectl apply -f kubevirt-operator.yaml

- kubectl apply -f kubevirt-cr.yaml
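The steps above apply local copies of kubevirt-operator.yaml and kubevirt-cr.yaml but do not show how they were fetched. Below is a sketch of one way to download them, assuming the manifests are published as GitHub release assets of the kubevirt/kubevirt project (the URL pattern used by the upstream install instructions). manifest_url is a hypothetical helper name.

```shell
# Build the download URL for a KubeVirt release manifest.
# $1 = release tag (the content of stable.txt), $2 = manifest file name.
manifest_url() {
  printf 'https://github.com/kubevirt/kubevirt/releases/download/%s/%s\n' "$1" "$2"
}

# Usage (needs network access); -s hides the curl progress meter, -L follows redirects:
#   RELEASE=$(curl -s https://storage.googleapis.com/kubevirt-prow/release/kubevirt/kubevirt/stable.txt)
#   curl -sL -o kubevirt-operator.yaml "$(manifest_url "$RELEASE" kubevirt-operator.yaml)"
#   curl -sL -o kubevirt-cr.yaml "$(manifest_url "$RELEASE" kubevirt-cr.yaml)"
```

Downloading first, rather than applying the URL directly, leaves a local kubevirt-cr.yaml to edit before it is applied.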

[root@k8s-master ~]# export RELEASE=$(curl https://storage.googleapis.com/kubevirt-prow/release/kubevirt/kubevirt/stable.txt)

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 8 100 8 0 0 10 0 --:--:-- --:--:-- --:--:-- 10

[root@k8s-master ~]# kubectl apply -f kubevirt-operator.yaml

namespace/kubevirt created

customresourcedefinition.apiextensions.k8s.io/kubevirts.kubevirt.io created

priorityclass.scheduling.k8s.io/kubevirt-cluster-critical created

clusterrole.rbac.authorization.k8s.io/kubevirt.io:operator created

serviceaccount/kubevirt-operator created

role.rbac.authorization.k8s.io/kubevirt-operator created

rolebinding.rbac.authorization.k8s.io/kubevirt-operator-rolebinding created

clusterrole.rbac.authorization.k8s.io/kubevirt-operator created

clusterrolebinding.rbac.authorization.k8s.io/kubevirt-operator created

deployment.apps/virt-operator created

[root@k8s-master ~]#

[root@k8s-master ~]# kubectl apply -f kubevirt-cr.yaml

kubevirt.kubevirt.io/kubevirt created

[root@k8s-master ~]# kubectl -n kubevirt wait kv kubevirt --for condition=Available

error: timed out waiting for the condition on kubevirts/kubevirt

[root@k8s-master ~]# kubectl edit -n kubevirt kubevirt kubevirt

error: kubevirts.kubevirt.io "kubevirt" could not be patched: Internal error occurred: failed calling webhook "kubevirt-update-validator.kubevirt.io": failed to call webhook: Post "https://kubevirt-operator-webhook.kubevirt.svc:443/kubevirt-validate-update?timeout=10s": dial tcp 10.106.166.169:443: i/o timeout

You can run `kubectl replace -f /tmp/kubectl-edit-3704516472.yaml` to try this update again.

[root@k8s-master ~]#

Confirm the KubeVirt pods are Running

[root@k8s-master ~]# kubectl get pods -n kubevirt -o wide

NAME                              READY   STATUS    RESTARTS   AGE     IP            NODE         NOMINATED NODE   READINESS GATES
virt-api-68d4b788f7-5zl86         1/1     Running   0          41s     10.244.1.19   k8s-node01   <none>           <none>
virt-api-68d4b788f7-cpngk         1/1     Running   0          41s     10.244.2.19   k8s-node02   <none>           <none>
virt-controller-887fd7878-6f9z9   1/1     Running   0          2m32s   10.244.2.16   k8s-node02   <none>           <none>
virt-controller-887fd7878-wfd5q   1/1     Running   0          2m58s   10.244.1.18   k8s-node01   <none>           <none>
virt-handler-6mghg                1/1     Running   0          3m14s   10.244.1.17   k8s-node01   <none>           <none>
virt-handler-8wv2b                1/1     Running   0          2m31s   10.244.2.17   k8s-node02   <none>           <none>
virt-operator-64675bb658-4vw8n    1/1     Running   0          41s     10.244.2.18   k8s-node02   <none>           <none>
virt-operator-64675bb658-zxvgf    1/1     Running   0          4m19s   10.244.1.16   k8s-node01   <none>           <none>

[root@k8s-master ~]#
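The same check can be scripted by counting pods whose STATUS is anything other than Running. This is a minimal sketch that assumes the default kubectl column layout, where STATUS is the third column; count_not_running is a hypothetical helper name.

```shell
# Count pods whose STATUS column is not "Running".
# Expects `kubectl get pods --no-headers` output on stdin (STATUS is column 3).
# Blank lines are skipped.
count_not_running() {
  awk 'NF && $3 != "Running" { n++ } END { print n + 0 }'
}

# Usage (needs a cluster):
#   kubectl get pods -n kubevirt --no-headers | count_not_running
```

A result of 0 means every listed pod reports Running. It does not by itself guarantee that the KubeVirt resource has reached the Available condition.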

Wait for the Available condition

[root@k8s-master ~]# kubectl -n kubevirt wait kv kubevirt --for condition=Available

kubevirt.kubevirt.io/kubevirt condition met

[root@k8s-master ~]#
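kubectl wait gives up after 30 seconds by default. That is shorter than the few minutes the components took to start here, and may be why the earlier attempt timed out. Below is a sketch that passes an explicit --timeout. wait_kubevirt_available is a hypothetical wrapper name and the 300s default is an arbitrary choice.

```shell
# Wait for the KubeVirt resource to become Available.
# $1 = optional timeout (kubectl duration syntax, e.g. 300s or 10m).
wait_kubevirt_available() {
  kubectl -n kubevirt wait kv kubevirt \
    --for condition=Available --timeout="${1:-300s}"
}

# Usage (needs a cluster):
#   wait_kubevirt_available        # waits up to 300s
#   wait_kubevirt_available 10m    # waits up to 10 minutes
```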


Deploy a StatefulSet

apiVersion: v1
kind: Service
metadata:
  name: nginx
  labels:
    app: nginx
spec:
  ports:
  - port: 80
    name: web
  clusterIP: None
  selector:
    app: nginx
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
spec:
  selector:
    matchLabels:
      app: nginx # has to match .spec.template.metadata.labels
  serviceName: "nginx"
  replicas: 1 # by default is 1
  minReadySeconds: 10 # by default is 0
  template:
    metadata:
      labels:
        app: nginx # has to match .spec.selector.matchLabels
    spec:
      terminationGracePeriodSeconds: 10
      containers:
      - name: nginx
        image: registry.k8s.io/nginx-slim:0.8
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
  volumeClaimTemplates:
  - metadata:
      name: www
    spec:
      accessModes: [ "ReadWriteOnce" ]
      storageClassName: "my-storage-class"
      resources:
        requests:
          storage: 1Gi

[root@k8s-master ~]# kubectl apply -f vm.yaml

Error from server (InternalError): error when creating "vm.yaml": Internal error occurred: failed calling webhook "virtualmachines-mutator.kubevirt.io": failed to call webhook: Post "https://virt-api.kubevirt.svc:443/virtualmachines-mutate?timeout=10s": context deadline exceeded

[root@k8s-master ~]#
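If the webhook timeout is transient, for example because virt-api has only just started, retrying the apply may be enough. A timeout that persists points to a connectivity problem between the API server and the virt-api service, which a retry will not fix. The following is a generic retry sketch; retry is a hypothetical helper, and the attempt count and delay in the usage line are arbitrary.

```shell
# Run a command up to $1 times, sleeping $2 seconds between failed attempts.
# Returns 0 on the first success, 1 if every attempt fails.
retry() {
  local attempts="$1" delay="$2" i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    "$@" && return 0
    # Only sleep when another attempt will follow.
    [ "$i" -lt "$attempts" ] && sleep "$delay"
    i=$((i + 1))
  done
  return 1
}

# Usage (needs a cluster):
#   retry 5 10 kubectl apply -f vm.yaml
```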

Contents of vm.yaml:

apiVersion: kubevirt.io/v1alpha3
kind: VirtualMachine
metadata:
  labels:
    kubevirt.io/vm: vm-cirros
  name: vm-cirros
spec:
  runStrategy: Always
  template:
    metadata:
      labels:
        kubevirt.io/vm: vm-cirros
    spec:
      domain:
        devices:
          disks:
          - disk:
              bus: virtio
            name: containerdisk
      terminationGracePeriodSeconds: 0
      volumes:
      - containerDisk:
          image: kubevirt/cirros-container-disk-demo:latest
        name: containerdisk

[root@k8s-master ~]# kubectl get vmis

No resources found in default namespace.

[root@k8s-master ~]#
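No VirtualMachineInstance is listed, presumably because the kubectl apply above failed and the VirtualMachine was never created. Once the apply succeeds, the instance phase can be read directly. This is a sketch, assuming the VM name vm-cirros from the manifest and the default namespace; vmi_phase is a hypothetical helper name.

```shell
# Print the phase of a VirtualMachineInstance (for example Scheduling or Running).
# $1 = VMI name; uses the current namespace.
vmi_phase() {
  kubectl get vmi "$1" -o jsonpath='{.status.phase}'
}

# Usage (needs a cluster with KubeVirt available):
#   vmi_phase vm-cirros
#   virtctl console vm-cirros   # attach to the serial console; needs virtctl installed
```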